AI Agents Don't Just Change How You Code — They Demand a New Kind of Company

The Quiet Disappointment of "AI Adoption"

Almost every company I speak with has "adopted AI." Engineers are using Claude Code, Cursor, Copilot, Codex. Designers are in Figma with AI plugins. Marketing teams have entire workflows built around generative tools. On paper, the revolution has arrived.

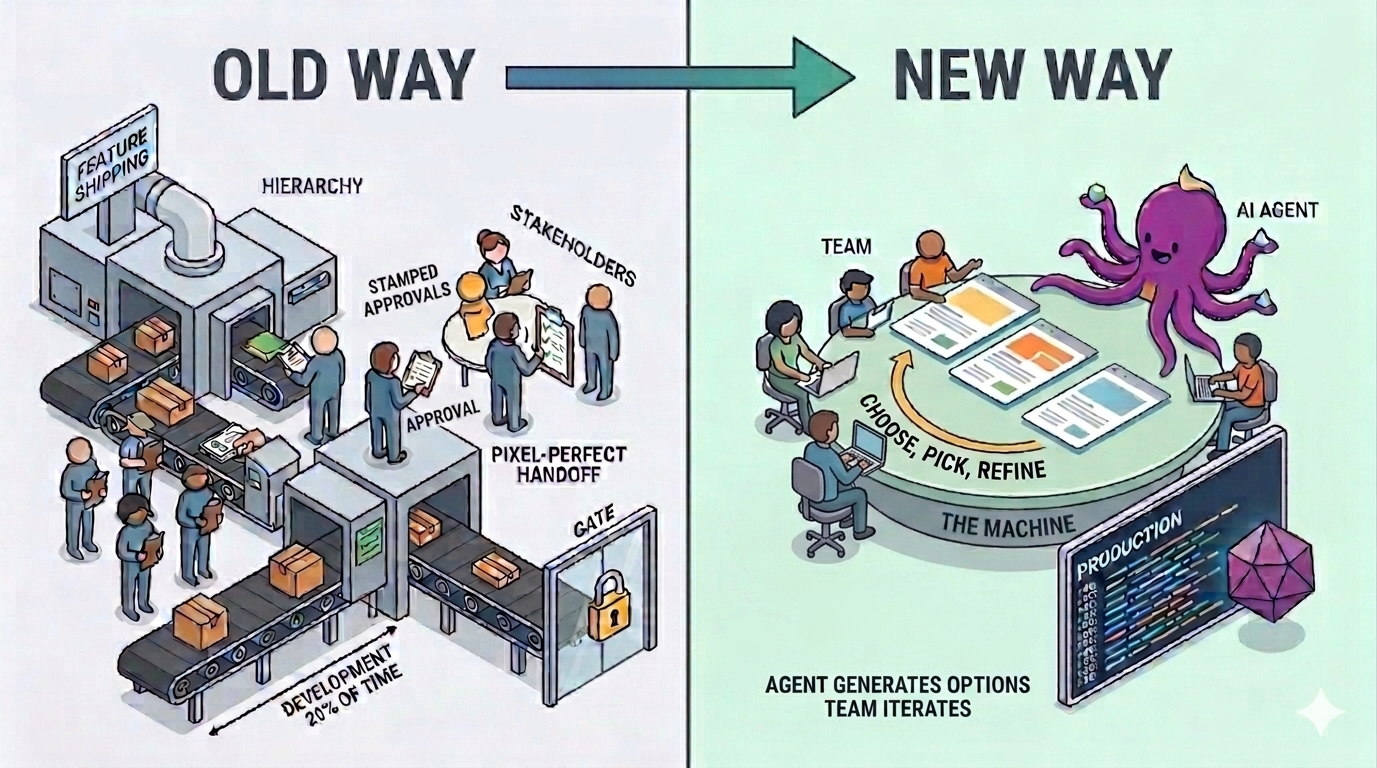

And yet, something is off. Recently I asked a senior executive at a large software company whether their development was now going several times faster. I expected at least a careful "two or three times." The answer was no. Not two times. Not even close. He then added the sentence that has stayed with me since: "Development is only about 20% of the time it takes to ship a feature. Even if you make that part infinitely fast, the whole system isn't much faster."

That is the story of AI adoption right now. Companies plugged AI agents into individual seats, but they did not touch the scaffolding around them — the stages, the approvals, the handoffs, the committees. The work still moves through the same pipes. The pipes themselves are the bottleneck.
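The executive's arithmetic is easy to check. The sketch below applies Amdahl's law to a delivery pipeline. It takes the 20% coding share from the quote above; the function name and the sample speedups are illustrative assumptions, not measurements from any company.

```python
def overall_speedup(stage_fraction: float, stage_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only one stage gets faster.

    stage_fraction: share of total lead time spent in the accelerated stage.
    stage_speedup:  how many times faster that stage becomes.
    """
    remaining_time = (1.0 - stage_fraction) + stage_fraction / stage_speedup
    return 1.0 / remaining_time


CODING_SHARE = 0.20  # taken from the quote; an estimate, not measured data

for speedup in (2, 10, float("inf")):
    total = overall_speedup(CODING_SHARE, speedup)
    print(f"coding {speedup}x faster -> feature ships {total:.2f}x faster")
```

Even with an infinitely fast coding step, the end-to-end gain tops out at 1.25x. The other 80% of the lead time is where the remaining speedup has to come from.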

Faster Tools in a Slower System

Imagine a factory where every worker suddenly gets a tool that makes them ten times more productive. If the conveyor belts still run at the old speed, if every part still needs six signatures to move forward, if QA still happens at the end in a single batch, the factory does not produce ten times more. It produces roughly the same output, with more people waiting between stations.

That is modern software development in 2026. The coding step has genuinely collapsed. A feature that once took a week can often be drafted in an afternoon. But the surrounding choreography — the kickoff meetings, the design reviews, the stakeholder approvals, the pixel‑perfect handoff, the QA cycle, the release train — is untouched. So the feature still takes a month. The AI did its job; the company did not.

The industry keeps measuring the wrong thing. We compare lines of code per hour, or pull requests per engineer. The real question is how long it takes for an idea, a customer insight, or a competitive threat to become a shipped feature in the hands of users. That number, for most companies, has barely moved.

Rethinking the Design‑Then‑Development Handoff

Consider one of the most sacred sequences in product work: design first, then development. Designers create a spec. Developers implement it pixel‑perfect. Back‑and‑forth for edge cases. Another round of review. Eventually it ships.

This sequence made sense when implementation was expensive. You did not want engineers guessing. You wanted them cutting once, after measuring twice. Design was the cheap stage; development was the costly one, so you resolved ambiguity before writing code.

AI agents invert these economics. Implementation is no longer the expensive stage. Generation is nearly free. And yet we continue to hand agents a frozen design and ask them to faithfully translate it. We are still optimizing for a scarcity that no longer exists.

A better approach: describe the intent, the constraints, and the brand guardrails, then ask the agent to generate three full, working, interactive pages. Not mockups. Not prototypes. Real pages, wired to the backend, navigable in a browser. Then, as a team, you look at the three options and pick the one that actually feels right. You iterate from inside the working product, not from a static file.

Notice what just happened. You skipped a whole stage. The separate "design approval, then build" loop collapsed into a single "explore, pick, refine" loop. The code is already written — not because you rushed it, but because producing it was cheap enough that generating three variants cost less than arguing over one. Interaction is no longer something you negotiate through screenshots; it is something you experience directly.

Letting the Agent Surprise You

There is a second, subtler shift. The traditional handoff assumes the human knows the right answer and the implementer is there to reproduce it. But AI agents do not merely reproduce — they propose. Sometimes what they produce is worse than what you had in mind. Sometimes it is roughly equivalent. And sometimes, honestly, it is better.

The discipline we need is the ability to look at what an agent built and separate two questions: Is this actually worse than what I wanted? Or is it just not what I imagined? Those are not the same thing. A yes to the first is a real problem. A yes to the second is ego dressed up as taste.

Companies that treat every deviation from the brief as a defect will grind to a halt correcting things that were never actually broken. The ones that treat deviations as candidate improvements — evaluated on their own merit, not on their fidelity to the original mental image — will move dramatically faster and, more often than not, arrive somewhere better than they originally planned.

Flatten the Company or Lose the Race

None of this works if the process wrapping the product is still hierarchical. If every change still needs to climb a ladder of approvals — product manager, design lead, engineering lead, VP, exec sponsor — the agent's speed is wasted waiting in queues. The agent can refactor your entire system over lunch. Your Slack thread to get sign‑off will still take three days.

Dynamic, flat companies are about to run circles around large, permission‑heavy ones. Not because the engineers are smarter, but because the distance between a good idea and a shipped feature is short. When you combine genuine AI superpowers with a small, empowered team that does not need committee approval to try something, you get cycle times that used to belong to demo‑day prototypes — but with production quality.

This is uncomfortable for established organizations, because the approval chains were not accidents. They were scar tissue from real incidents. Someone shipped something dangerous, so we added a review. Someone broke production, so we added an approval. Every gate has a story behind it. Removing them feels reckless.

But those gates were designed for a world where mistakes were hard to detect and expensive to reverse. That is not our world anymore.

The New Checks and Balances

Flattening a company does not mean removing discipline. It means moving the discipline to where it actually protects you.

The new center of gravity is simple: nothing should be breaking. Not "nothing should be unfamiliar." Not "nothing should deviate from last year's patterns." Nothing should be breaking. That is the invariant worth defending.

How do you defend it when code is flowing in from AI agents ten times faster than before? Not with more human reviewers in the loop — that is exactly the pipe that is already clogged. You defend it with systems that run at the same speed as the agents:

- Unit tests that actually assert behavior, not placeholder shells. When an agent changes a function, the tests catch regressions before the PR is even reviewed.

- Integration tests that exercise real flows — real database, real services, real boundaries. Mocked integrations pass while production burns. Real ones tell the truth.

- CI/CD that runs automatically and refuses to merge anything that breaks the invariants.
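To make the first bullet concrete, here is a minimal sketch of the difference between a placeholder shell and a test that asserts behavior. The `apply_discount` function and its rules are hypothetical, invented only for this illustration. The tests are plain functions with `assert` statements, in the style a runner such as pytest would collect.

```python
def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical function under test: price after a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents - (price_cents * percent) // 100


def test_placeholder_shell():
    # Placeholder shell: passes as long as the call does not raise,
    # so an agent could change the arithmetic and this would stay green.
    apply_discount(1000, 10)


def test_ten_percent_off():
    # Asserts behavior: a regression in the arithmetic fails here.
    assert apply_discount(1000, 10) == 900


def test_zero_percent_changes_nothing():
    assert apply_discount(1999, 0) == 1999


def test_rejects_out_of_range_percent():
    # The error path is part of the contract, so it is asserted too.
    try:
        apply_discount(1000, 150)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for percent > 100")
```

A CI job that runs tests like these on every pull request and blocks the merge when any of them fails is one way to get the "refuses to merge" behavior from the third bullet.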

But tests and CI/CD only tell you whether the code behaves the way its author expected. They do not tell you what is actually happening in production: under real load, with real users, with real data no test fixture would think to invent. That is where logs come in, and it is where teams most often underinvest.

Logs are the ground truth of a running system. They record what the code actually did, not what it was supposed to do. In the old world, logs were mostly for humans — a developer grepping through files after something had already gone wrong. In the AI‑agent world, they become something far more powerful: the substrate agents reason over. Feed production logs into an agent and it can tell you which errors share a root cause, which users are hitting which failure paths, which feature you shipped yesterday quietly broke something you did not notice. The loop closes: the system reports on itself, the agent reasons over that report, and problems surface before a human has to spot them. This is exactly the gap we are building Shipbook to close — turning logs from an afterthought into the ground‑truth layer that both humans and AI agents depend on.
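The simplest version of "which errors share a root cause, which users are hitting which failure paths" can be sketched without any agent at all. The log format, the sample lines, and the function names below are assumptions made up for this illustration. They are not Shipbook's API or any real product's output. An agent reasoning over logs would go well beyond this kind of pattern matching.

```python
import re
from collections import defaultdict

# Hypothetical log lines in an assumed "timestamp LEVEL user=ID message" format.
LOGS = [
    "2026-01-12T10:00:01Z ERROR user=4821 Payment failed: timeout after 3000ms calling gateway",
    "2026-01-12T10:00:02Z INFO user=17 Page loaded in 120ms",
    "2026-01-12T10:00:05Z ERROR user=933 Payment failed: timeout after 3012ms calling gateway",
    "2026-01-12T10:00:09Z ERROR user=4821 Cart 55871 not found",
    "2026-01-12T10:00:11Z ERROR user=52 Cart 60233 not found",
]

LINE = re.compile(r"^(?P<ts>\S+) (?P<level>[A-Z]+) user=(?P<user>\d+) (?P<msg>.*)$")


def signature(message: str) -> str:
    """Replace every run of digits so messages differing only in IDs or timings match."""
    return re.sub(r"\d+", "<n>", message)


def group_errors(lines):
    """Map each error signature to the set of user IDs that hit it."""
    groups = defaultdict(set)
    for line in lines:
        match = LINE.match(line)
        if match and match["level"] == "ERROR":
            groups[signature(match["msg"])].add(match["user"])
    return dict(groups)


for sig, users in group_errors(LOGS).items():
    print(f"{len(users)} user(s): {sig}")
```

With the sample lines above, the four error lines collapse into two signatures, each hit by two distinct users. That grouping is the raw material a human or an agent would start from when looking for a shared cause.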

When all of this is in place — tests, CI/CD, and a genuine ground‑truth layer from production — something remarkable happens. The speed at which you can try new features, validate them under real usage, and ship them becomes enormous. Not because you removed the checks, but because the checks now run at machine speed instead of meeting speed.

Staying Ahead of the Curve

The companies that will lead the next decade are not the ones with the most AI licenses. They are the ones that used AI adoption as a reason to rebuild how they work.

That means fewer stages and more loops. Fewer approvals and more guardrails. Less "pixel perfect from spec" and more "pick the best of three working options." Less deference to what the brief said, more willingness to recognize that the agent's suggestion might actually be better. A flatter structure. A deeper investment in tests, observability, and logs as the real safety net.

AI agents are an enormous gift. But a gift you do not unwrap is just clutter. If your company still looks and moves the way it did in 2022, you have not really adopted AI — you have just bolted it onto a machine that was not built for it. The teams that redesign the machine itself are the ones who will still be here, shaping the market, when the rest are wondering why their impressive tools never quite delivered.