The Bug Hiding in Our Logs That AI Almost Helped Us Miss

A unit test was timing out on my laptop. The kind of small annoyance you push to next week. I asked Claude to look at it.

A couple of hours later we had a fix. We also had something I did not expect: confirmation that the same bug, in a slightly stricter form, had been firing 1.3 million times a day in production for the past day and a half — silently, in a fire-and-forget error path nobody was watching.

The fix is uninteresting. The way we got there is the part worth writing about.

The Hypothesis That Sounded Small

While Claude was poking at the local failure, it offered a guess about the production impact. The buggy code path was reached only by a couple of background jobs, it said. Bounded scope. The kind of thing you fix in the next deploy window, not at midnight.

It was a confident answer. Two callers. Job tier. Done. Claude did add a passing line at the end — "worth grepping production logs to confirm" — but it landed the way most footnotes land: as a thing you nod at and move on from. The whole framing pointed away from urgency.

I almost moved on. The fix was ready, the tests were green, and there was nothing in the model's analysis that suggested otherwise. What I did instead — and this is the only thing I want to take credit for in this whole story — was not accept the confident answer. I told Claude to actually check. Pull the production logs. See if the bug was, in fact, contained.

That single sentence is the difference between this story and the much more boring version of it. Claude had given me a tidy diagnosis. The diagnosis was wrong. And the only reason I knew to push back is that I have learned, slowly and at some cost, that "bounded scope" answers from a confident model are exactly where I should be most suspicious. Not because the model is reckless. Because confident reasoning in the absence of data is just well-formed guessing.

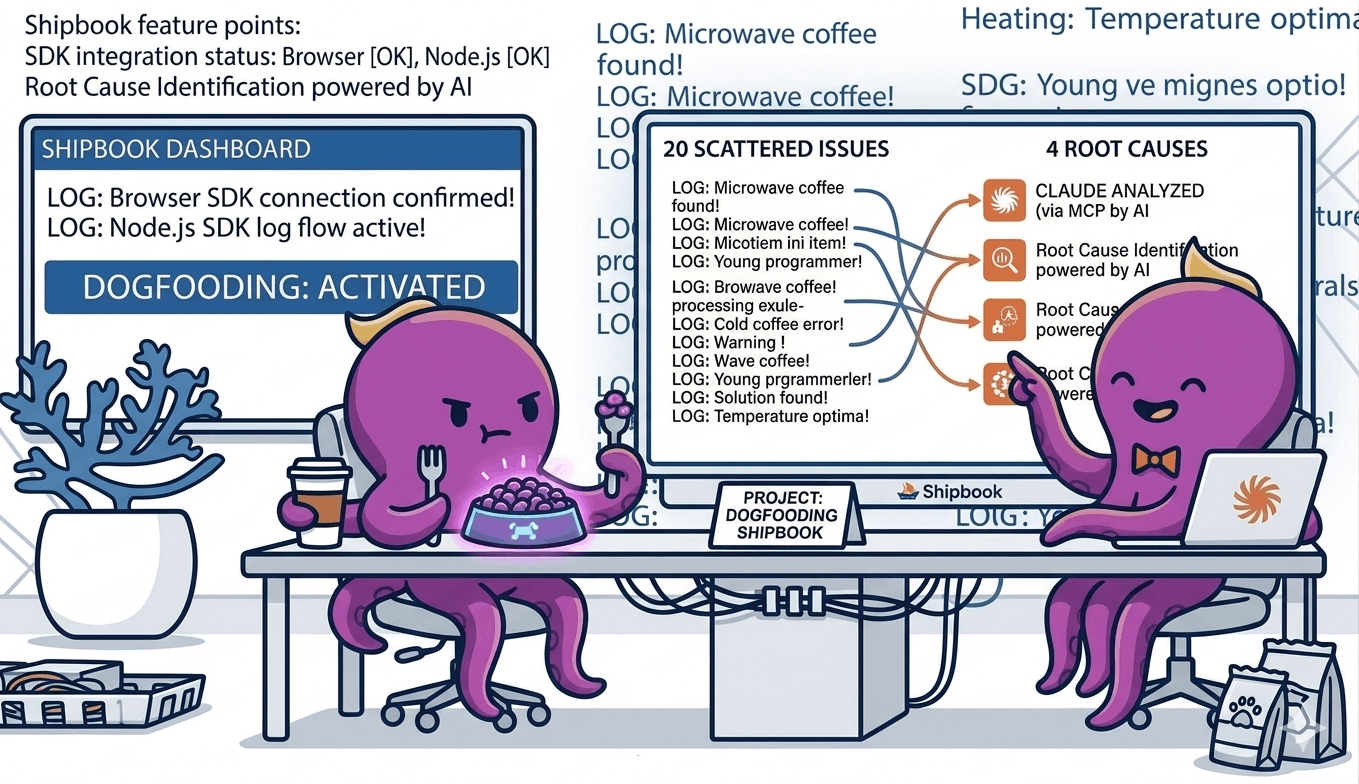

So Claude called the Shipbook MCP and pulled a seven-day histogram of the relevant error.

The histogram looked like a wall. Six errors a day. Six. Four. Three. Six. Then a cliff. Then 1.3 million.

A number that high cannot come from a job that runs every five minutes. The original "background jobs only" story was wrong, and it was wrong by an order of magnitude that none of us — engineer or model — would have inferred from the code alone. The cliff also lined up exactly with a constant in our own code that had switched on stricter behavior in our new infrastructure. The story was no longer "small bounded bug." It was "we have been silently breaking writes to a whole cluster since the moment we deployed that constant, and we did not know."

The MCP did not just confirm a hypothesis. It overturned a benign one and replaced it with a real one.

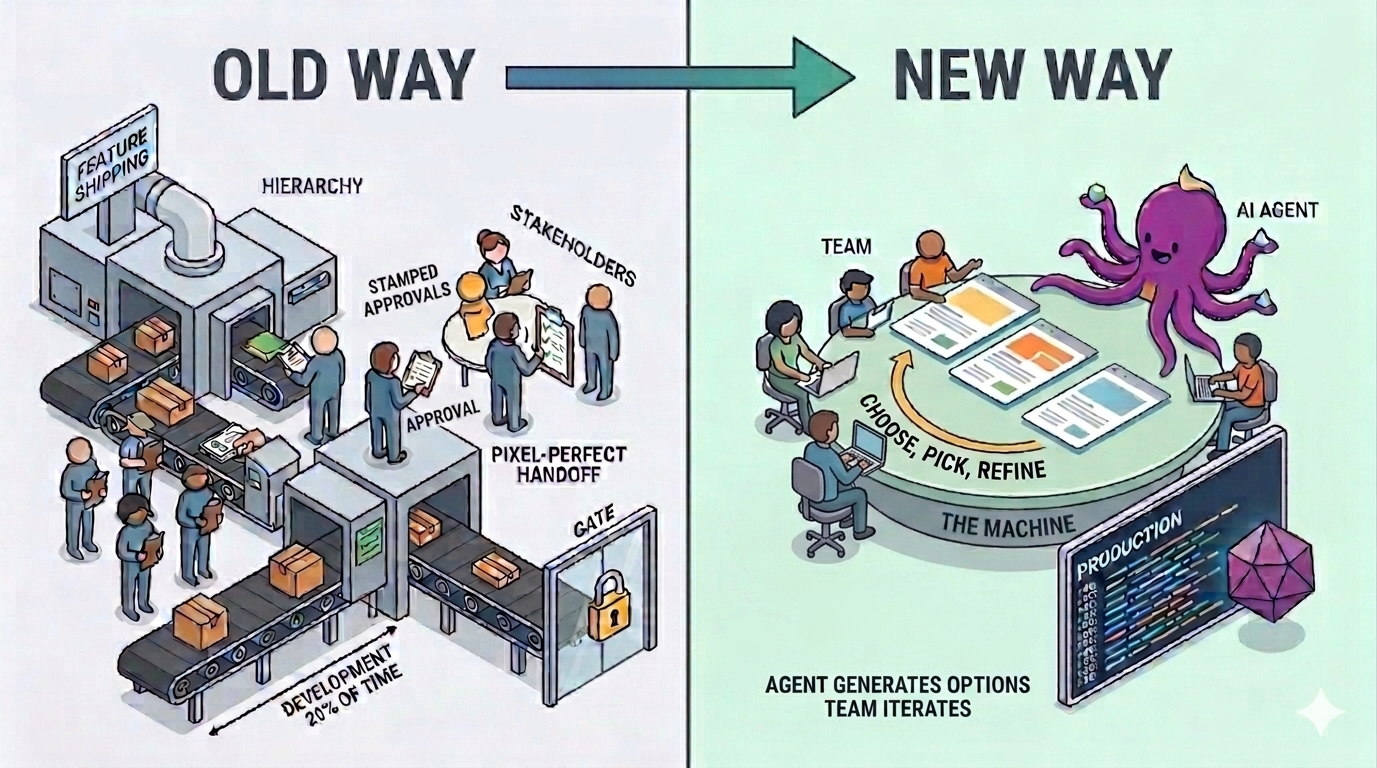

What the Tight Loop Actually Buys You

I want to be careful about what is new here, because plenty of tools claim some version of "AI debugs production." Most of them are wrong.

What is new is not that an AI can look at logs. It is that the model can stay inside its hypothesis-and-test loop without leaving the conversation. The engineer's job, in that loop, is no longer to fetch data. It is to decide which questions are worth asking. The model handles the rest.

The volume on that histogram did not just upgrade the priority of the bug. It corrected the model. With the number on the table, Claude re-read the code, found a caller it had missed — on the request path of every active session — and revised its picture of the situation. The interesting thing is not that the model corrected itself, but what corrected it. It was not better reasoning. It was a number that did not fit the story. A model that cannot see production will keep telling stories that do not survive contact with it. A model that can see production gets caught when it is wrong, and updates.

This is the loop we are betting on: the model proposes, the data disposes, and both stay in the same conversation while it happens.

Two Bugs for the Price of One

While we were already inside the data, we noticed something we had not gone looking for. The first bug had been masking a second one. A customer we had recently promoted to a higher tier was supposed to have their traffic distributed across several pieces of dedicated infrastructure. Because of a related routing decision, all of their traffic had been piling onto one of those pieces, with the others sitting empty.

We would have caught this eventually. Probably the next time someone looked at a latency dashboard and noticed an asymmetry. Probably weeks from now. We caught it today, because we were already there. It cost us one extra question.

This is, I think, an underrated effect of having a low-friction loop. When the cost of looking is low, you look more. When you look more, you find things you would not have specifically gone hunting for. Cheap curiosity compounds.

Logs Nobody Queries Are Not Insight

The bug that bled 1.3 million times a day was logged faithfully. Every single error was caught, formatted, written out — and ignored. It was not in a dashboard. It did not page anyone. The error path was deliberately fire-and-forget, because the alternative would have been a request-blocking failure mode that we did not want.

A log no one queries is closer to a tree falling in an empty forest than to information. It exists, but it does not act on the world. Most production systems are full of these. We have built a generation of observability tools that are excellent at producing logs and mediocre at producing attention.

The interesting question is not "how do we collect more logs." It is "how do we make the logs that already exist legible to the people, or the agents, who can do something about them."

Ground Truth and the Model

There is a thing AI systems are bad at, which is making confident claims about specific facts they cannot verify. There is a thing they are good at, which is reasoning over data once that data is in front of them.

The trick is to put the data in front of them. Not as a paragraph in a prompt — as a tool call. Not as yesterday's snapshot — as live state. The model should not be guessing what production looks like; it should be looking.

That is what an MCP server does. It turns whatever capability you wire up — logs, in this case — into a function the model can call when it decides it needs the answer. The model's reasoning is no longer floating; it is anchored to whatever ground truth you have given it access to.

We have spent the last few months wiring our own product into our own conversations. Our logs sit a tool call away from Claude. So do our deployments, our error analytics, our session traces. The result is not magic. It is something quieter and more useful: an engineer and an agent, looking at the same reality at the same time, and reasoning about it together.

The Footnote That Matters

There is one more thing worth saying, because it is the most honest part of the story. No end user was affected by any of this.

The cluster where the writes were failing was the new one — the secondary side of an in-progress migration. Reads were still being served from the original cluster, which was healthy and complete. The 1.3 million errors a day were silently accumulating inside our own infrastructure, on a side of the system no customer was reading from. To anyone using Shipbook today, everything looked fine. It was fine.

The damage was strictly future damage. The day we cut over reads to the new cluster, every one of those failed updates would have surfaced as a missing field, a stale session, a piece of context that should have been there and was not. The bug was a quiet, slow accumulation of data drift that would have detonated the moment we trusted the new cluster.

I almost did not include this section because it felt like deflating the drama. But it is actually the point. The most valuable bugs to catch are the ones that are not yet on fire — the ones that are loading their fuel quietly, behind a flag, on a path nobody is yet looking at. Those are the ones that turn into postmortems six months later, when the team has forgotten the context and the data is unrecoverable.

We did not catch a customer-facing crisis today. We caught the seed of one, while it was still small enough to fix in an afternoon. That is the kind of catch a tight loop with production data makes possible. Not heroic firefighting. Just earlier noticing.

The Quiet Lesson

I keep coming back to one thing. The bug we found today was not a hard bug. It was a small, bounded, fixable mistake — the kind of thing any engineer would have spotted in five minutes if they happened to be looking at the right log line.

Nobody was looking. Nobody was going to look. It would have hidden until the migration finished and the damage became visible.

The thing that broke this pattern was not a smarter model or a better dashboard. It was that the model could ask the question, and the answer was right there, in the same conversation. That is the loop we are betting on. It feels small from the inside. From the outside, I think it is going to look like a quiet shift in how production systems are kept healthy.

A flaky test on a Wednesday afternoon is not where I expected to start writing about that. But here we are.